AI MODEL EVALUATION

Most AI video decisions get made on vibes. This isn't that.

I ran image and video generation models through the same prompts across three scenes for a mock Apple MacBook Neo concept via Higgsfield: same brief, every model, no shortcuts. The goal wasn't to find the best tool. It was to build a framework for knowing which tool wins in which situation, and why.

What I'm documenting:

- Which image models produce the cleanest start frames for video generation
- Where each video model excels or falls apart (motion quality, facial consistency, product accuracy)
- When to switch models vs. when to iterate prompts vs. when you've hit a limitation
- The QC criteria that make these calls repeatable for a team, not just gut instinct

SPOILER ALERT:

The Nano Banana models won every image test (Nano Banana 2 took two of three, Nano Banana Pro the third). Kling 3.0 won every video test. Text rendering fails across every model.

Image Tests

Female Actor & Citrus MacBook Neo

PROMPT:

Woman sits in an empty open plan modern tech office at night with soft amber lighting in the background. She sits, working late night on her Macbook Neo, typing, focused on screen, slight smile of concentration and confidence. Her hair is tied back, wearing a soft oversized cardigan, contrasting with the hard lines of the dimly lit office. Stone walls, single potted monstera in the background corner. Closeup from slight low angle, camera is positioned at a 3/4 angle from the front, framing from the torso up. Scene is soft, as if shot on an analogue camera using a compact anamorphic, cinematic lens.

KEY FINDINGS

BEST PERFORMER: Nano Banana 2

Nano Banana 2 outperformed every other model on every front: soft, naturally placed lighting, realistic human features, and an office setting that reads as natural to any viewer. The only flaws were minor ones in small details, and the image is more than usable for the project.

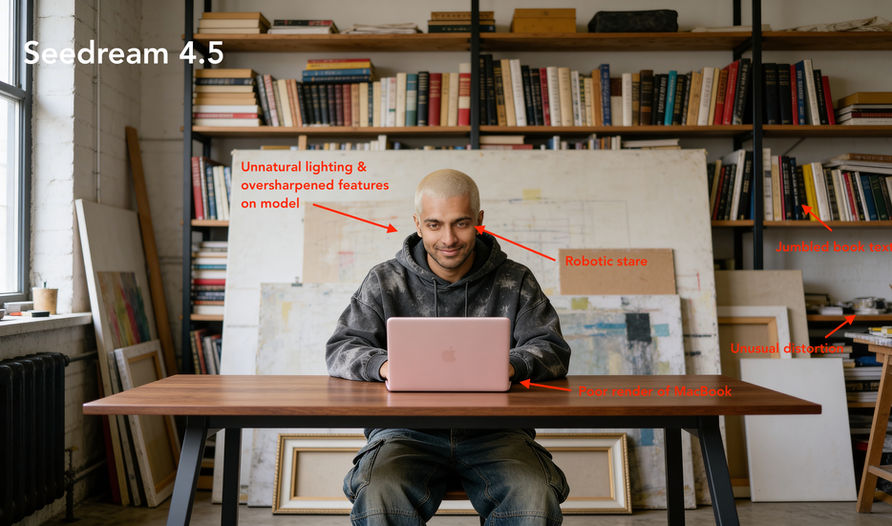

Male Actor & Blush MacBook Neo

PROMPT:

Medium shot, directly in front of man sitting at a modern looking walnut desk. He sits in a lived-in loft creative studio, with books on shelves and unfinished canvases of art behind him. He's focused on the laptop working away on his Macbook Neo with a confident smirk on his face. He wears a dark grey acid wash oversized hoodie, and Japanese style denim baggy pants. Soft window lighting falls on the entire scene with soft shadows and cinematic grading.

KEY FINDINGS

BEST PERFORMER: Nano Banana Pro

Nano Banana Pro produced a near-perfect render of the prompt and the intended vibe of the scene, and reruns of the prompt gave consistent results. The lighting is natural, the character's facial expression and clothing render correctly, and the bokeh looks realistic. The bokeh is unevenly distributed, though, which keeps this from being an A+ result; it remains completely usable.

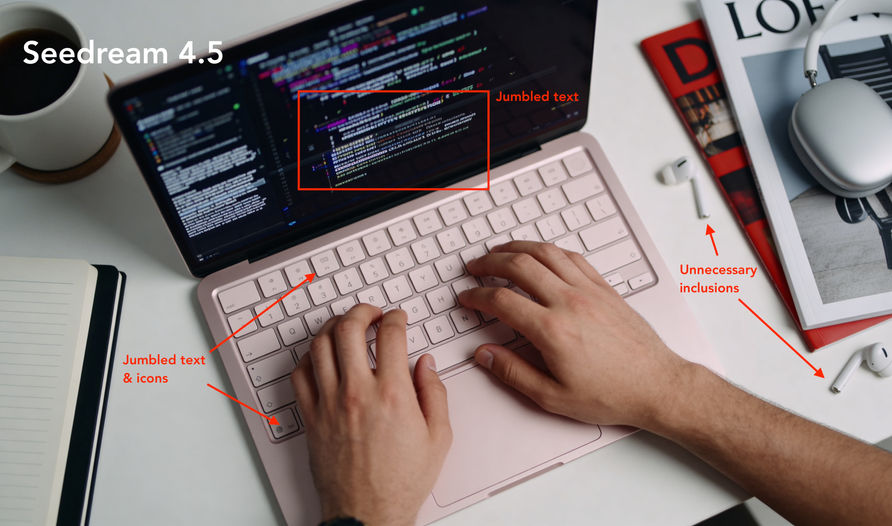

Overhead Shot & Blush MacBook Neo

PROMPT:

Overhead top-down camera shot, close up. Hands are in-frame typing on the keyboard. An Xcode window is displayed on the screen, while the desk area surrounding the laptop is neat, with coffee in a mug, a journal, a midcentury modern lamp, succulent plant, and Airpod Max nearby with cinematic color grading.

KEY FINDINGS

BEST PERFORMER: Nano Banana 2

Nano Banana 2 once again produced the most usable result, though the broader lesson from this test was that no AI model should be used to generate scenes with a large amount of on-screen text and iconography. Nano Banana 2 captured more of the cinematic feel and aesthetic of a typical Apple commercial, while every other model generated results that felt several generations behind.

TAKEAWAYS

- Nano Banana 2 won two of the three image tests, with Nano Banana Pro taking the third. Use Nano Banana 2 for start frames that need product accuracy and lighting control.
- Text rendering (UI, screens, code) fails across every model. Don't try to fix it through prompting. Frame shots to exclude text, or plan for post overlays from the start.
- Complex backgrounds increase failure rates. Limit prompts to a single background element and add visual richness in post if needed.
- At scale, document which models handle which scenarios so teams pick the right tool before generating, not after reviewing failures.
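One lightweight way to document which models handle which scenarios is a lookup table the team consults before generating. The Python sketch below is hypothetical: the scenario labels, the `pick_image_model` helper, and the fallback behavior are assumptions, and the entries only record the winners of the three image tests in this evaluation, not a general ranking.

```python
from typing import NamedTuple


class ImagePick(NamedTuple):
    model: str
    note: str


# Hypothetical scenario labels. Each entry records the winner of one image
# test in this evaluation; extend it as the team runs more tests.
IMAGE_ROUTING: dict[str, ImagePick] = {
    "talent_office_night": ImagePick(
        "Nano Banana 2", "Natural lighting and human features; minor detail flaws"),
    "talent_studio_window_light": ImagePick(
        "Nano Banana Pro", "Consistent across reruns; uneven bokeh"),
    "overhead_desk_with_screen": ImagePick(
        "Nano Banana 2", "Frame out on-screen text; plan UI overlays in post"),
}

# Fallback: the model that won most image tests here, flagged as untested.
DEFAULT_PICK = ImagePick(
    "Nano Banana 2", "No documented result for this scenario; test before committing")


def pick_image_model(scenario: str) -> ImagePick:
    """Return the documented pick for a scenario, or the flagged fallback."""
    return IMAGE_ROUTING.get(scenario, DEFAULT_PICK)
```

Falling back to the most frequent winner keeps untested scenarios moving, while the note makes it obvious that the choice has not been validated for that shot type.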

Video Tests

Female Model & Video Generation

PROMPT:

Woman confidently types away on her Macbook Neo, using only the keyboard and track pad. Camera pulls in, slow and subtle motion. Slight head tilt as a window opens on screen, as its glow subtly illuminates her face.

KEY FINDINGS

Kling 3.0 created the most natural result for the given scenario. With a great balance of steady camera movement, realistic micro-expressions, and natural hand movement, the output was more than usable.

Minimax 2.3 produced unnatural movement that made the video feel as if it were playing in reverse. Veo 3 generated completely unnatural finger movement and keyboard use, though it did introduce an interesting, subtle handheld feel to its camera movement. The worst performer was Seedance 1.5 Pro, with inaccurate product rendering, unnatural typing, and what looks like low-bitrate output, visible as color banding and noise in the footage.

Male Model & Video Generation

PROMPT:

Camera slowly pulls out as the man finishes work on the Macbook Neo. He nods confidently as he finalizes his project, closes the laptop, then sits back and places his hands behind his head, rubs it, and relaxes.

KEY FINDINGS

Kling 3.0 created the most natural result for the given scenario, with a great balance of steady camera movement, realistic micro-expressions, and natural hand movement. Apart from a minor inaccuracy in the product render, the output was more than usable.

Minimax 2.3 had our model interacting with the MacBook as if it were an iPad. Veo 3.1 had the laptop closing before the model's hands initiated the movement, and the footage looked slightly overprocessed. Seedance 1.5 Pro came in last with bizarre results: unnatural movement, inaccurate product rendering, and subtle facial drift that made the model look like a completely different person.

Overhead Shot & Video Generation

PROMPT:

Camera slowly pulls out as hands type enthusiastically on keyboard while also using the track pad. The text displayed in the dark window updates as the user types a line of code. Nothing else on the screen should be changing. The user's left hand then reaches for the coffee.

KEY FINDINGS

Having already concluded that generative AI falls flat when a scene contains many instances of text, I was curious to see what would happen once movement was introduced.

Unsurprisingly, Kling 3.0 came out on top again. It followed the prompt most accurately and also introduced a subtle table shake as the model interacted with the MacBook, which gave the scene a more realistic feel.

Veo 3.1 generated unnatural movement as well as unwanted text movement on the screen. Seedance 1.5 Pro handled on-screen movement worst, turning much of the screen into globs of text while both the UI and the hands behaved erratically. Minimax 2.3 produced the most unexpected result: the camera pulled out of the frame entirely and exposed a whole other person using the MacBook, along with odd hand movements and product accuracy anomalies. Poor on-screen text was consistent across all models.

TAKEAWAYS

- Kling 3.0 produced the most natural human motion. Use it for talent-driven content.
- Hand-object interaction is the weakest area across all models. Frame shots to minimize visible contact where possible.
- Facial drift (seen in Seedance 1.5 Pro) is a disqualifying failure for brand work. Make facial consistency a hard pass/fail checkpoint.
- Product accuracy degrades during motion across all models. Lock product details in the start frame and focus the video prompt on realistic movement instead.
- At scale, model selection mistakes are expensive. Build a decision matrix so teams route content to the right model before generation, not after failures.
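The facial-consistency checkpoint and the other failure modes above can be turned into a repeatable review gate. Below is a minimal Python sketch, assuming a reviewer records each check by hand as true or false; the `ClipReview` fields, the `qc_verdict` function, and the split into hard and soft checks are hypothetical. Only facial consistency is treated as a hard fail, as the takeaways recommend; routing the other checks to "revise" (re-prompt, re-frame, or fix in post) is an assumption.

```python
from dataclasses import dataclass


@dataclass
class ClipReview:
    """One reviewer's observations on a generated clip (hypothetical fields)."""
    model: str
    facial_consistency: bool    # same person from first frame to last?
    product_accurate: bool      # product still matches the locked start frame?
    hand_contact_natural: bool  # typing / closing the lid looks physically plausible?
    legible_screen_text: bool   # True if no text is visible or all of it reads correctly


# Hard failures disqualify a clip outright for brand work.
HARD_CHECKS = ("facial_consistency",)
# Soft failures send the clip back for another iteration or a post fix (assumption).
SOFT_CHECKS = ("product_accurate", "hand_contact_natural", "legible_screen_text")


def qc_verdict(review: ClipReview) -> str:
    """Return 'reject', 'pass', or 'revise: <failed checks>'."""
    if any(not getattr(review, name) for name in HARD_CHECKS):
        return "reject"
    failed = [name for name in SOFT_CHECKS if not getattr(review, name)]
    return ("revise: " + ", ".join(failed)) if failed else "pass"


if __name__ == "__main__":
    drifted = ClipReview("hypothetical-clip-a", facial_consistency=False,
                         product_accurate=True, hand_contact_natural=True,
                         legible_screen_text=True)
    print(qc_verdict(drifted))  # reject

    minor = ClipReview("hypothetical-clip-b", facial_consistency=True,
                       product_accurate=False, hand_contact_natural=True,
                       legible_screen_text=True)
    print(qc_verdict(minor))  # revise: product_accurate
```

Keeping hard and soft checks in separate tuples means a team can promote a check (for example, product accuracy on a launch spot) to a hard fail without touching the verdict logic.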